Why the "Build Fast" Mindset Is Creating a Massive Governance, Risk, and Compliance Gap in 2026

The tension in the modern enterprise is reaching a breaking point. On one side, you have world-class developers: engineers who are masterful at integrating LLMs, orchestrating APIs, and scaling infrastructure. On the other side, you have the immovable wall of governance, risk, and compliance (GRC).

We are currently witnessing a dangerous trend: leaders are asking their engineering teams to “hard-code” compliance into AI agents. This is a fundamental category error. Your developers are brilliant at building technology, but they didn’t go to school to master the nuances of OCC guidance, HIPAA, or the evolving EU AI Act.

To a developer, a “successful” AI interaction is one with low latency and high user engagement. To a compliance officer, a successful interaction is one that is 100% deterministic and produces a defensible audit trail. These two goals are often in direct opposition.

The Fluency Gap: Speaking Two Different Languages

Engineering is the language of optimization. Governance, risk, and compliance is the language of restriction. When you force a developer to own the GRC layer, you aren’t just slowing down your roadmap; you’re creating a “Hallucination Tax” that your organization will pay for years.

Developers speak in Python and Go; regulators speak in liability and evidence. In the age of Agentic AI, the software can now make decisions on its own. It can waive fees, approve applications, or disclose data without a human ever touching the keyboard. If your developers are the ones “guessing” the guardrails, your organization is accumulating unmeasured exposure at runtime.

The "IT Roadmap" Fallacy: Where You Can and Can’t Cut Corners

In a standard IT roadmap, there are plenty of places to cut corners to hit a deadline. You can ship with a “good enough” UI. You can delay a secondary feature. You can fix bugs in the next sprint. Your customers are often lenient, especially if the core value is there.

However, your regulators are not your customers. They do not accept “v2.0” as an excuse for a compliance failure today. A regulator doesn’t care about your agile methodology or your sprint velocity. If your AI handles a transaction without the proper governance, risk, and compliance controls in place, the fines are real. In 2026, we’ve seen that a “helpful” AI that violates policy is more expensive than no AI at all.

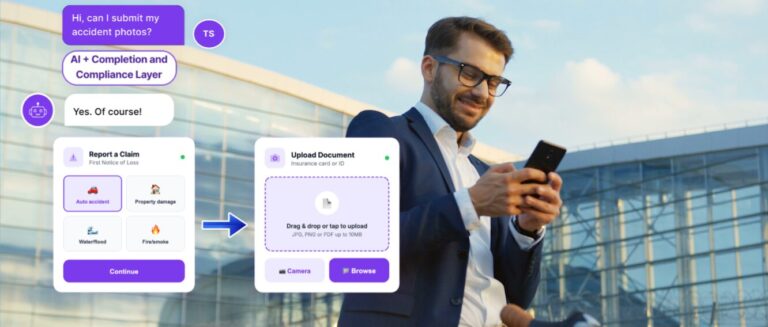

Let Developers Build; Let Systems Enforce

The solution isn’t to put more burden on your developers. The solution is to decouple the “Intelligence” from the “Enforcement.” Let your developers do what they do best: build innovative, fast, and scalable technology. But you must also find a system, a Completion Layer, that understands the language of compliance natively.

As we discussed in our previous look at The Hallucination Tax, AI often fails in the “unmeasured middle.” By implementing a deterministic layer that sits on top of your developers’ code, you ensure that every AI decision is governed, every outcome is auditable, and your engineers never have to play “amateur lawyer.”
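To make the “decouple Intelligence from Enforcement” idea concrete, here is a minimal sketch in Python. It is not the Completion Layer or any vendor’s product; every name in it (`ProposedAction`, `evaluate`, `EnforcementLayer`, the `MAX_FEE_WAIVER` limit of 50.00) is hypothetical. The assumption is that the AI agent only *proposes* actions, and a separate, deterministic rule check owned by compliance decides whether each one runs, writing an audit record every time and denying anything no rule covers.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProposedAction:
    """An action the AI agent wants to take (hypothetical schema)."""
    kind: str                       # e.g. "waive_fee", "disclose_data"
    amount: float = 0.0             # monetary value, if any
    customer_verified: bool = False


@dataclass
class Decision:
    allowed: bool
    reason: str


# Hypothetical limit, owned by compliance rather than by the agent's prompt.
MAX_FEE_WAIVER = 50.00


def evaluate(action: ProposedAction) -> Decision:
    """Deterministic policy check: the same input always yields the same decision."""
    if action.kind == "waive_fee":
        if action.amount > MAX_FEE_WAIVER:
            return Decision(False, f"fee waiver {action.amount:.2f} exceeds limit {MAX_FEE_WAIVER:.2f}")
        return Decision(True, "fee waiver within limit")
    if action.kind == "disclose_data":
        if not action.customer_verified:
            return Decision(False, "identity not verified")
        return Decision(True, "identity verified")
    # Default-deny: anything the policy does not explicitly cover is blocked.
    return Decision(False, f"no policy covers action '{action.kind}'")


@dataclass
class EnforcementLayer:
    """Sits between the agent and execution; records every decision as a JSON line."""
    audit_log: list[str] = field(default_factory=list)

    def enforce(self, action: ProposedAction) -> Decision:
        decision = evaluate(action)
        self.audit_log.append(json.dumps({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "action": action.kind,
            "amount": action.amount,
            "allowed": decision.allowed,
            "reason": decision.reason,
        }))
        return decision


if __name__ == "__main__":
    layer = EnforcementLayer()
    print(layer.enforce(ProposedAction("waive_fee", amount=25.0)).allowed)   # True
    print(layer.enforce(ProposedAction("waive_fee", amount=500.0)).allowed)  # False
    print(layer.enforce(ProposedAction("approve_loan")).reason)
    print(len(layer.audit_log))                                              # 3
```

The design choice worth noting is the default-deny branch: the developer can add new agent capabilities freely, but none of them reach a customer until a compliance-owned rule explicitly allows them, and every attempt leaves an audit entry either way.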

What’s Your Number?

If you are currently relying on your dev team to be your last line of defense for governance, risk, and compliance, you are carrying millions in unmeasured risk. It’s time to find out exactly how much.

Take the Free AI Risk Assessment at codn.callvu.com to see where your developers’ code ends and your compliance exposure begins.