Bridging the Gap Between Agentic Innovation and Strict Utilities Compliance Standards

The tension within modern utility enterprises has reached a critical failure point. On one side, CTOs are pushing for rapid AI deployment to handle soaring call volumes and complex field dispatches. On the other side, the immovable wall of utilities compliance – enforced by the likes of FERC, NERC, and state-level public utility commissions – demands a level of precision that standard generative AI simply cannot guarantee.

As we recently explored in our blog post, The Architecture of AI Auditability Beyond Chat Transcripts, the era of “AI experimentation” is over. We have entered the era of Agentic Accountability. In this new landscape, a “successful” AI interaction isn’t just one that satisfies the customer; it is one that follows a deterministic process every time and leaves a defensible audit trail.

The Completion Gap: Where Intent Meets Reality

Most utility companies are currently making a fundamental category error: they are asking AI models to “manage” compliance. This is a recipe for disaster. Generative AI is probabilistic – it “guesses” the next best word or action based on patterns. However, utilities compliance is binary. Either a safety protocol was followed, or it wasn’t. Either a customer was read their regulatory disclosures, or they weren’t.

When you rely on an AI agent to handle a service connection or a billing dispute without a dedicated Completion and Compliance Layer, you are effectively paying a “Hallucination Tax.” You are betting that the AI won’t skip a mandatory verification step or hallucinate a policy that doesn’t exist. In the high-stakes world of public infrastructure, that is a bet no executive should take.

Key Industry Risk: In the utilities sector, a “helpful” AI that ignores a safety warning to resolve a ticket faster isn’t an efficiency gain – it’s a massive legal liability.

Decoupling Intelligence from Enforcement

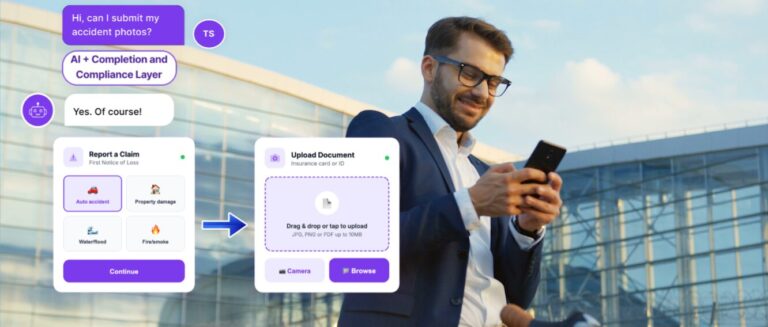

The solution isn’t to slow down your AI roadmap; it’s to change the architecture. You must decouple “Intelligence” (the AI’s ability to understand the customer) from “Enforcement” (the system’s ability to execute a process correctly).

By implementing a dedicated utilities compliance framework at the execution level, you allow your AI to be “conversational” while your backend remains “deterministic.” This Completion Layer acts as a digital governor. It ensures that:

- Mandatory Gates are Locked: The AI cannot proceed to “Step B” until “Step A” (like a KYC check or a safety disclosure) has been verified.

- Structured Data is Captured: Instead of messy chat logs, you get clean, auditable data that maps directly to regulatory requirements.

- Field Operations are Verified: For field workers, the layer ensures that every “job completion” is backed by the specific evidence (photos, signatures, timestamped GPS data) required for safety audits.
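To make the three guarantees above concrete, here is a minimal Python sketch of a gate-enforcing layer. It is an illustration under stated assumptions, not a reference implementation: the `CompletionLayer` class, the step names, and the evidence fields in `REQUIRED_EVIDENCE` are all hypothetical, and a real deployment would map them to the specific regulatory requirements that apply to you.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical ordered steps and the evidence each one requires.
# Dict order defines the order in which gates must be passed.
REQUIRED_EVIDENCE = {
    "kyc_check": {"customer_id"},
    "safety_disclosure": {"disclosure_id"},
    "job_completion": {"photo", "signature", "gps_timestamp"},
}


class GateError(Exception):
    """Raised when a step is attempted before it is allowed."""


@dataclass
class CompletionLayer:
    verified: dict = field(default_factory=dict)

    def verify(self, step: str, evidence: dict) -> dict:
        """Mark a step verified only if earlier gates passed and evidence is complete."""
        steps = list(REQUIRED_EVIDENCE)
        if step not in REQUIRED_EVIDENCE:
            raise GateError(f"unknown step: {step}")
        # Mandatory gates: every earlier step must already be verified.
        for earlier in steps[: steps.index(step)]:
            if earlier not in self.verified:
                raise GateError(f"{step} blocked: {earlier} not verified")
        # Field verification: all required evidence keys must be present.
        missing = REQUIRED_EVIDENCE[step] - evidence.keys()
        if missing:
            raise GateError(f"{step} blocked: missing evidence {sorted(missing)}")
        # Structured data: store a clean record instead of a chat transcript.
        record = {
            "step": step,
            "evidence": dict(evidence),
            "verified_at": datetime.now(timezone.utc).isoformat(),
        }
        self.verified[step] = record
        return record

    def is_complete(self) -> bool:
        return all(step in self.verified for step in REQUIRED_EVIDENCE)


layer = CompletionLayer()
try:
    # The AI tries to jump straight to the disclosure step.
    layer.verify("safety_disclosure", {"disclosure_id": "D-17"})
except GateError as err:
    print(err)  # safety_disclosure blocked: kyc_check not verified
```

The point of the design is that the conversational model never decides whether a gate has passed. It can only submit evidence, and the layer either records the step or refuses it.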

The Risk of the “Unmeasured Middle”

Without this layer, your organization is accumulating unmeasured exposure at runtime. If your AI handles a transaction – like waiving a late fee or scheduling an emergency repair – without the proper utilities compliance protocols, the resulting fines are real, not hypothetical. In 2026, we’ve seen that a “helpful” AI that violates policy can be far more expensive than having no AI at all.

Your regulators are not your customers. They don’t care about your “sprint velocity” or your “innovative CX.” They care about adherence to the law. By placing a Completion Layer between your AI and your core systems, you ensure that every decision is governed and every outcome is auditable.
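As a rough sketch of what “governed and auditable” can mean in practice, the Python example below treats the AI’s output as a proposal, decides it with fixed rules, and writes every outcome to an append-only log. Everything here is an assumption made for illustration: the `MAX_AUTO_WAIVER` threshold, the `execute_proposal` function, and the hash-chained `AuditLog` (one common way to make tampering detectable) are hypothetical and not drawn from any regulation or product.

```python
import hashlib
import json
from dataclasses import dataclass, field

# Hypothetical policy: late-fee waivers up to this amount may be auto-approved.
MAX_AUTO_WAIVER = 25.00


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def append(self, event: dict) -> dict:
        """Add an entry whose hash covers the event and the previous entry's hash."""
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        payload = json.dumps(event, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        entry = {"event": event, "prev_hash": prev_hash, "hash": entry_hash}
        self.entries.append(entry)
        return entry

    def verify_chain(self) -> bool:
        """Recompute every hash; any edited entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            payload = json.dumps(entry["event"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


def execute_proposal(proposal: dict, log: AuditLog) -> str:
    """Decide an AI-proposed action with fixed rules and log the outcome."""
    if proposal.get("action") != "waive_late_fee":
        decision = "rejected_unknown_action"
    elif proposal.get("amount", 0) <= MAX_AUTO_WAIVER:
        decision = "approved"
    else:
        decision = "escalated_to_human"
    log.append({"proposal": proposal, "decision": decision})
    return decision


log = AuditLog()
print(execute_proposal({"action": "waive_late_fee", "amount": 10.0}, log))  # approved
print(execute_proposal({"action": "waive_late_fee", "amount": 80.0}, log))  # escalated_to_human
print(log.verify_chain())  # True
```

The same input always produces the same decision, and each decision leaves a record an auditor can check, regardless of how the model phrased its request.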

What is Your Exposure?

If you are currently relying on your development team to be your last line of defense for regulatory risk, you could be carrying millions in unmeasured liability. It is time to find out exactly where your developers’ code ends and your compliance exposure begins.

Quantify your risk in less than 60 seconds. Use our Free AI Risk Estimator to see how your current AI strategy stacks up against 2026 standards: